| AstroNuclPhysics ® Nuclear Physics - Astrophysics - Cosmology - Philosophy | Gravity, black holes and physics |

Chapter 1

GRAVITATION AND ITS PLACE IN PHYSICS

1.1. Nature, space, gravity. Development of scientific knowledge.

1.2. Newton's law of gravitation

1.3. Mechanical LeSage hypothesis of the nature of gravity

1.4. Analogy between gravity and electrostatics

1.5. Electromagnetic field. Maxwell's equations.

1.6. Four-dimensional spacetime and special theory of relativity

1.1. Nature, space, gravity. Development of scientific knowledge.

Every thoughtful person will certainly be intellectually and emotionally impressed by two phenomena:

=> The beauty and amazing diversity of the surrounding nature. Inanimate nature: mountains, rivers, waterfalls, clouds, lakes, seas, islands, ... Living nature: plants, forests, meadows, algae, mushrooms, ...; animals: mammals, birds, reptiles, fish, insects. All of it in enormous diversity, including what can be observed through a microscope. And at the same time comes the realization that we are an integral part of this nature, a small evolutionary "offshoot" of it.

=> Looking at the night sky gives us a feeling of the mysterious depth of the vast Universe, hiding unknown realms and certainly unknown phenomena. Yet with the naked eye we can see only a tiny fraction of the shining objects in the nearby universe. We can convince ourselves of the unimaginable expanse of the Universe only through astronomical observations using powerful telescopes. We then realize how tiny "our world" is compared to the infinitely vast Universe..!..

Astronomical observations have shown that the fundamental force governing the structure and dynamic processes in the Universe is gravity. Gravitation is a force with which every person is in direct and constant contact from birth to death, in the form of the Earth's gravity - weight (Latin gravis = heavy), and humanity has thus encountered gravitational phenomena from the very beginning. Nevertheless (or perhaps because of it), as with most basic natural phenomena, very mistaken ideas about gravity persisted for a very long time, and we can say that the origin and essence of gravity are not fully explained even now. In this introductory chapter, we will very briefly summarize how views on gravity, space, time, matter, the universe, and nature in general have gradually evolved and been refined from antiquity to the present day. We will then reflect on the general essence of nature and the universe, and on the secrets of our existence.

Science in antiquity

The origins of science in antiquity arose from

entirely pragmatic motives: to systematically and correctly solve

the problems that life brought. Such specific problems were, for

example, the construction of cult buildings and the construction

of irrigation systems, the rational management and cultivation of

land, the distribution of food or other objects and their

exchange, and so on. To solve such tasks, it was necessary to

learn to determine distances, height differences and areas, to

study and predict the weather, to count and distribute goods in

terms of quality and quantity.

The needs of exchange and distribution led to the introduction of the basic arithmetic operations of addition, subtraction, multiplication and division. These operations, which express properties of ordinary material objects, model actual processes with real bodies. To determine the distances and sizes of objects, measures - units - were introduced, i.e. certain standard objects with which the sizes of other objects can be expressed by comparison. In other words, the quantities and sizes of real bodies were assigned numbers - their count and dimensions - which were manipulated according to the rules of arithmetic, and the resulting numbers were converted back into the corresponding count and size of real objects. Thus mathematics appeared in human activity as the science of models, and a general scheme began to be used (initially subconsciously):

reality → model (mathematics) → reality

Units of length, time, mass

In the past, people chose basic mechanical units for measuring distance, time and mass (amount of matter) according to the characteristics of the environment in which they lived and using objects they encountered. For length, these were initially human measures such as the "foot", "cubit" (elbow) or "inch". Later, when the shape and approximate size of the Earth were already known, units of length began to be derived from the dimensions of the Earth. In France, after 1790, efforts began to systematize the previously diverse units. A metric system of units was created, based on the unit of length 1 meter and its decimal multiples. The name "meter" comes etymologically from the ancient Greek word "métron" (= measure; related to measuring, length, size). The scientific commission (whose members included, for example, J.L.Lagrange and P.S.Laplace), in cooperation with surveyors, proposed that the basic unit of length, 1 meter, be set as one ten-millionth of the Earth's quadrant, i.e. of the meridian arc from the pole to the equator (1 kilometer then comes out as 1/40,000 of the Earth's circumference along a meridian).

Note: There was also an alternative proposal to define 1 meter as the length of a precise pendulum whose single swing (half of the full oscillation period) lasts 1 second. This proposal was ultimately not adopted; its main disadvantage is that the period depends on the geographical location of the pendulum on the Earth, because the gravitational acceleration varies with latitude.
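The size of this location dependence can be estimated with a short sketch. It assumes an ideal simple pendulum with small swings, the historical "seconds pendulum" convention (each swing lasts 1 second, so the full period is 2 seconds), and round illustrative values for the gravitational acceleration at the equator and at the poles. The function name and constants are chosen here for illustration only.

```python
import math

def pendulum_length(full_period_s: float, g: float) -> float:
    """Length (m) of an ideal simple pendulum with the given full period.

    Uses the small-amplitude formula T = 2*pi*sqrt(L/g), solved for L.
    """
    return g * (full_period_s / (2.0 * math.pi)) ** 2

# Assumed round values of sea-level gravitational acceleration (m/s^2).
G_EQUATOR = 9.780
G_POLE = 9.832

# "Seconds pendulum": each swing takes 1 s, so the full period is 2 s.
length_equator = pendulum_length(2.0, G_EQUATOR)
length_pole = pendulum_length(2.0, G_POLE)

print(f"equator: {length_equator:.4f} m")
print(f"pole:    {length_pole:.4f} m")
print(f"difference: {(length_pole - length_equator) * 1000:.1f} mm")
```

With these assumed values the two lengths differ by roughly 5 mm, far more than can be tolerated in a standard of length.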

For measuring the enormous distances of cosmic objects, the terrestrial units meter and kilometer are too small and impractical. Three other, significantly larger units are used here:

-> The astronomical unit (AU) is equal to the mean distance of the Earth from the Sun, which is 149,597,870.7 km, i.e. 1 AU = 1.495978707×10^11 m.

-> The light year (ly) is the distance that light travels in 1 year. At the speed of light in a vacuum (299,792.458 km/s) it is 1 ly = 9.461×10^15 m = 63,241 AU. The light year is the most important unit of length in the distant universe.

-> The parsec (pc, "parallax second") is the distance from which the mean distance of the Earth from the Sun (1 AU) subtends an angle of 1 arc second. A parsec is 30.86 trillion km: 1 pc = 3.086×10^16 m = 206,264.8 AU = 3.26 ly. In astronomy, the parsec is sometimes used; in astrophysics the light year prevails.
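The relations between these three units follow directly from their definitions and can be checked with a few lines of code. The sketch below assumes the exact defined value of the speed of light, the IAU value of the astronomical unit, and a Julian year of 365.25 days as the length of "1 year"; the constant names are illustrative.

```python
import math

C = 299_792_458.0               # speed of light in vacuum, m/s (exact by definition)
AU = 1.495978707e11             # astronomical unit, m (IAU definition)
JULIAN_YEAR = 365.25 * 86_400   # seconds in one year (assumed: Julian year)

# Light year: distance travelled by light in one year.
LIGHT_YEAR = C * JULIAN_YEAR

# Parsec: distance from which 1 AU subtends an angle of 1 arc second.
ARCSEC = math.radians(1.0 / 3600.0)
PARSEC = AU / math.tan(ARCSEC)

print(f"1 ly = {LIGHT_YEAR:.4e} m = {LIGHT_YEAR / AU:,.0f} AU")
print(f"1 pc = {PARSEC:.4e} m = {PARSEC / AU:,.1f} AU")
print(f"1 pc = {PARSEC / LIGHT_YEAR:.2f} ly")
```

A different convention for the length of the year (for example the tropical year) would change the light year only in the fifth significant digit.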

Time was derived from the duration of one revolution of the Earth around the Sun ("year"), the period of the Moon's revolution around the Earth ("month") and the period of one rotation of the Earth around its axis ("day"); the day was divided into 24 "hours", an hour into 60 "minutes", and a minute into 60 "seconds". The name "minute" comes from the Latin pars minuta - a reduced (small) part of an hour, "second" from pars minuta secunda - the second reduced part.

Note: In earlier

times, the Earth's revolution around the Sun, the Moon's

revolution around the Earth, or the Earth's rotation around its

axis were unknown, so 1 day was the time between two consecutive

sunrises or sunsets, 1 month was the time between two full moons,

and 1 year was the total duration of the four seasons

(equinoxes).

The physical quantity mass quantifies the amount of matter contained in a given body. It is sometimes also called weight, but this is physically misleading. The current basic unit of mass is 1 kilogram (1000 grams), which originated as the mass of 1 liter of water. The name "gram" comes etymologically from the Greek word "grámma" (= small weight). Multiples and fractions are the kilogram, ton (1000 kg), milligram and microgram. In everyday life, the mass of bodies is most often determined by weighing, using the Earth's gravity. Physically, however, mass expresses the inertial resistance of a body to acceleration according to Newton's 2nd law of mechanics.

At the end of the 19th century,

quantities and units for electromagnetism were

introduced (§1.5 "Electromagnetic

field. Maxwell's equations"), and by the middle of the 20th century for atomic,

nuclear and particle physics

(§1.1 "Atoms and atomic nuclei" and §1.5 "Elementary

particles and accelerators"

in the monograph "Nuclear physics and ionizing

radiation physics").

Even though today these units are defined and metrologically realized much more precisely than by using the properties of the Earth, for historical reasons they have in principle been preserved. However, their virtually arbitrary selection obscures some fundamental relationships in natural laws, in which complicated constants occur whose numerical values are given only by the choice of units.

In §2.9 "Geometrodynamic system of units" the so-called geometrodynamic units will

be discussed, in which the basic natural constants take on unit

values. This greatly simplifies the writing of mathematical

formulas.

Astronomical observations. Astrology, alchemy; quackery

Already in prehistoric times, people observed that not only day and night but also the seasons recur periodically, and that there is a close connection between these daily and annual cycles and the movement of the Sun, Moon and planets across the sky. The need to determine and predict the time of day and the season, i.e. the natural conditions for agricultural and other work, therefore naturally led to astronomical observations and to the introduction of the corresponding time units: day, month, year (measuring time both short-term - hours, and long-term - calendar). The Babylonians and Egyptians excelled in observing the sky in ancient times.

The study of objects outside the Earth - in space - is the subject of astronomy (Greek astron = star, nomos = rule, order, law; that is, the "laws of the stars") *), popularly called stargazing. Although current astronomy also includes the study of planetary systems, interstellar matter, and the overall evolution of the universe (cosmology), stars and their systems (galaxies) are still the main objects of interest for astronomers.

*) Originally, this meant the "regularity of star motion", because the apparent shifts of stars and constellations in the sky were considered to be motion of the stars. Nothing was known about the very nature of the stars in antiquity and the Middle Ages; they were considered unchanging distant points of light - fixed stars - against the background of which the Moon, the planets and the Sun move. The true nature of the stars was revealed only gradually during the 19th and 20th centuries: they are very distant, huge spheres of hot gas, in whose interiors thermonuclear reactions take place (for most of their evolution). The Sun is also a star; the stars are a kind of "distant suns". They are not immutable and eternal: during their evolution they shrink and expand, they can even explode violently, and they "live" for a finite time - details are in §4.1 "Gravity and evolution of stars".

Astronomy is sometimes considered the "queen of the sciences", from two points of view:

a) It is the oldest natural science. Astronomical observations, records and calculations of the positions of celestial bodies in the sky have been made since ancient times. At that time, however, it was not considered a natural science, because it was not known that earthly and "heavenly" nature are one and the same.

b) Everything is part of the universe, and our Earth is a cosmic body; everything around us was formed by astrophysical processes in the universe (§4.1 "The role of gravity in the formation and evolution of stars" and §5.4 "Standard cosmological model. The Big Bang. Shaping the structure of the universe."). In the concepts of unitary field theory, contemporary fundamental physics tries to solve the problems of the microworld of elementary particles and of the megaworld - the origin and evolution of the universe - on the same basis (Chapter B "Unitary field theory and quantum gravity", §B.6 "Unification of fundamental interactions. Supergravity. Superstrings.").

Ancient civilizations generally had very poor and completely distorted knowledge of the universe. No wonder - after all, we would be no better off if we just looked around the sky without optical instruments and without prior knowledge. There are fundamental obstacles to the immediate visual cognition of the universe:

1. Cosmic objects are very distant. The optical system of our eye creates such small images of these objects on the retina that it is impossible to distinguish the details of their structure. Very little light reaches us from distant objects in space, and because of the small diameter of the eye's pupil (approx. 5 mm) it is usually not enough to see them, even with the relatively high sensitivity of the retina.

2. We live on the planet Earth, which rotates (a "cosmic carousel") and orbits the Sun. These movements of us as observers (of which we are not aware) give the false impression that the observed objects in space are moving.

3. The Earth's atmosphere absorbs a lot of light, and our eye is sensitive only to a narrow region of the spectrum of electromagnetic radiation. Other important "windows" into space are hidden from our sight.

4. The Earth's gravity binds us tightly to the ground and prevents us from "going to take a closer look" at cosmic objects. Our close dependence on terrestrial living conditions and our "snail-like" slowness in overcoming the vast distances in space prevent us from doing so as well.

Astrology

The observed connections between periodic natural events and the movement of celestial bodies, the causes of which ancient observers did not know, led to the idea that the positions and movements of celestial bodies are also related to other phenomena on Earth *) - various catastrophes, wars and even human destinies. From this false notion developed astrology, which until the end of the Middle Ages was the main motive for astronomical observations. Of great importance to astrology is the uneven distribution of the observed stars, which form the so-called constellations - real or apparent figures that human imagination has likened to various earthly objects, animals or themes from mythology. We thus encounter names such as the constellations of the Great Bear (Ursa Major), Capricorn (Capricornus), Swan (Cygnus), Dragon (Draco), Pegasus, Bull (Taurus), Andromeda, ... and many others (88 in total). The stars in a constellation often have nothing in common; they have different brightness and lie at different distances. They are merely projected onto neighbouring places in the sky, and only our imagination has artificially assigned them to a certain figure. Nevertheless, astrology often attributes specific meanings to these arbitrarily created names.

However, already Copernicus' and Kepler's findings about planetary motion made astrological claims highly unlikely: individual planets, when viewed from another planet - the Earth - are projected as they move onto different constellations (themselves chance projections) in the starry sky; there is no reason to attribute to these chance projections any real influence on the course of events. Indeed, since then, educated people have mostly not believed in astrology; let us recall the words of J.A.Komenský (Comenius): "Astrologers - they are not astronomers, but liars from the stars!". Later scientific findings have confirmed this view even more firmly. It is hard to imagine that the apparent projections of sunlit planets onto chance constellation patterns in the sky could somehow affect the complex structure of DNA macromolecules inside the germ cells of Homo sapiens on another of the planets orbiting the Sun! No distant cosmic bodies can affect people's personality traits or their fate. So astrology is not a science, but it can be a nice game using astronomical props ...

* ) It is interesting that the ideas of

cosmic action are again encountered in modern physics in

connection with some interpretations of Mach's principle,

according to which local physical laws are determined by the

distribution and motion of all matter in the universe - see

"Appendix A". Of course, this has nothing to do with

astrological ideas!

It is similar with numerology. Numbers fascinate many people, and some attribute magical powers to them - believing that a particular number affects a certain characteristic of a person. Astrology, numerology, chiromancy, iridology, crystal balls, card reading, etc. belong to the same category of superstitious "oracle" or "prophetic" techniques that do not work, yet many people believe in them ...

Alchemy

Closely related to astrology was another false way *) of exploring nature - alchemy, which, based on some metaphysical principles and philosophical ideas, sought to achieve the transmutation of the elements **) - especially to make gold - and to find a universal "philosopher's stone". In their attempts (which, given the goals then pursued, were inevitably unsuccessful!), however, the alchemists gathered a large amount of empirical knowledge which later, after the alchemical misconceptions had been abandoned, became an important basis for understanding the true nature of the chemical combination of substances - the basis for building chemistry.

*) This critical evaluation applies only to the scientific side of alchemy and astrology! Some spiritual and philosophical aspects, especially the striving for a unified conception of being, for spiritual improvement and inner transformation, and for the unification of art and science, were at a high level for their time and can still appeal to us today. However, among current proponents of alchemy and astrology we often encounter misunderstandings that come from confusing and merging the misguided scientific ideas of the past with valuable spiritual and philosophical ideas of enduring validity.

**) However, the alchemists not only had no idea about atoms and their nuclei, they did not even distinguish elements from compounds. They judged substances according to their external manifestations and a few simple chemical reactions that they were able to carry out. Today, with the methods of nuclear physics, we can actually perform transmutations of elements - this is "nuclear alchemy".

[Figures: Symbols of the Sun, Moon and zodiac stars in astrology | Alchemical laboratory | Charlatan sells fraudulent medicines (Lambert Doomer, 1623-1700)]

From the turn of the 17th and 18th centuries, when feudalism and the church lost their absolute power, the former alchemists - charlatans and often fraudulent "gold-makers" in the service of the rich and powerful - were gradually replaced by serious naturalists, who no longer sought a recipe for making gold or various elixirs, but tried to penetrate to the essence of the structure of matter. This eventually succeeded in atomic and nuclear physics and chemistry (see Construction of atoms).

Quackery versus science

Erroneous, untrustworthy or fraudulent schools of thought and behavior are called quackery or charlatanism. According to literary sources, the name "charlatan" comes from the Italian word "ciarlatano", referring to the inhabitants of the town of Cerreto, from where several prominent magicians and healers came in the 15th century. In French, the word "charlatan" began to be used for an untrustworthy healer and impostor using unproven and medically unrecognized practices, and more generally for other babblers, tricksters and swindlers...

From the mental roots of astrology, alchemy and religion, some newer quack movements are growing - parapsychology calling itself by the misleading name psychotronics, various ideas about the aura, cosmic energy, quantum consciousness, geopathogenic zones, megalithic legends, homeopathy, alternative medicine. They are a frequent part of the line of thought called esotericism (cf. the passage "The Meaning of Phenomena and Events" in the essay "Science and Faith"). On this occasion, we will briefly mention these worrying errors, and even frauds, which, paradoxically, have become more and more widespread in people's minds in recent decades.

Proponents of these ideas often claim that our ancestors in ancient times ("megalithic culture") already knew and used the mysterious "cosmic energy" and possessed miraculous abilities. Today's science is accused of ignorance for not acknowledging it... Modern charlatans equip old superstitious ideas with new "scientific" props; they invoke the latest quantum, holographic and relativistic physical theories so that the lay public will trust them. Without knowing what these things are, they talk about quanta of energy, gravitons, unified interactions, information fields, relativistic effects, tachyons and superluminal velocities, and a number of other concepts which they have borrowed, without study or understanding, from the arsenal of valid theories of contemporary science. They use computers and spread their phantasmagorias with the help of information technology ...

Most of the charlatan schools are characterized by a misunderstanding of the concept of energy. There is talk of mental, life, psychic, magnetic, cosmic, divine, etheric, negative or positive energy, and of energy zones. The physical meaning of energy is erroneously confused with the common folk notion of biological and mental "energy", which is in fact a combinatorial and biochemical property of the arrangement of complex molecules and their systems in an organism; it has nothing to do with the physical concept of energy. Not surprisingly, such confusion often produces very bizarre nonsense. They talk about various energy transmitters, receivers and interference suppressors, zones, auras, "energetically active" water and other substances, the miraculous energetic properties of pyramids and other structures ...

No disguise in a modern, seemingly scientific garb can change the fact that all these claims are completely unsubstantiated assumptions, dating from the pre-scientific period and based on misconceptions of the past. These starting points have long since been refuted. Nevertheless, many trusting people with underdeveloped critical thinking continue to believe the wrong conclusions drawn from them. They fail to recognize that the apparent successes of alternative medicine are due to the placebo effect, and that the proclaimed successes of psychotronics are in fact only a selection effect: with a chance of success of about 50%, the failed cases are left out and forgotten, while the (randomly) successful cases are glorified and widely publicized. Objective comparison and confrontation (independent "blind" experiments with subsequent statistical evaluation) is strongly opposed by charlatans; they argue that "scientific supervision" inhibits their mental faculties, or disrupts their connection with the transcendent, cosmic energy, gods or demons, and so on. Where such comparisons have nevertheless been made (e.g. with dowsers searching for water with a divining rod), no statistically significant paranormal effect was demonstrated.
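How strongly the selection effect can mislead is easy to quantify. The following sketch is a purely illustrative calculation, not a description of any actual experiment: it assumes independent yes/no trials with a 50% chance of success by pure guessing, and asks how likely it is that at least one of many guessers achieves a flawless series. The function names and the example numbers are hypothetical.

```python
def chance_of_perfect_run(n_people: int, n_trials: int, p: float = 0.5) -> float:
    """Probability that at least one of n_people gets all n_trials right
    by pure guessing, each trial succeeding independently with probability p."""
    p_single = p ** n_trials              # one person is right every time
    return 1.0 - (1.0 - p_single) ** n_people

def expected_perfect_runs(n_people: int, n_trials: int, p: float = 0.5) -> float:
    """Expected number of people with a flawless record by chance alone."""
    return n_people * p ** n_trials

# Example: 1000 guessers, each making 10 yes/no predictions.
print(f"{chance_of_perfect_run(1000, 10):.2f}")
print(f"{expected_perfect_runs(1000, 10):.2f}")
```

Under these assumptions there is a better than even chance that some guesser records ten hits out of ten; if only that case is publicized and the other attempts are forgotten, pure chance looks like a remarkable ability. This is why blind experiments with statistical evaluation of all cases are needed.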

Quackery and fraud are most common in the field of health and disease, in medicine - miraculous means and methods that supposedly can cure all diseases, from cancer to AIDS. These may be pharmacological means (universal "miracle drugs") or physical means - various biolamps, magnets, generators of electromagnetic frequencies or of some mysterious fields, etc. The use of these methods, as well as of psychotronics and homeopathy in medicine, can sometimes subjectively improve health through the placebo effect; or the improvement may be a mere coincidence (even without treatment, the body would have "helped itself")... In serious diseases, however, their use carries the risk that effective treatment will be delayed while waiting for the alleged effects, which may cause irreversible damage to the patient's health.

Quantum consciousness, quantum medicine

Concepts and findings of quantum physics are a popular tool of fanciful movements. This field of physics is sufficiently mysterious (all the more so in connection with the theory of relativity), and human consciousness is also shrouded in mystery, so in the opinion of some people the two should have something in common..?.. The common name "quantum consciousness" or "quantum mind" denotes a group of hypotheses proposing putative quantum-mechanical phenomena in the function of the brain to explain thinking and consciousness. Soon after the creation of quantum mechanics in the works of Planck, Bohr, Schrödinger, Heisenberg, Pauli and others (see e.g. "Quantum Physics"), quantum theory became an attractive option for resolving the philosophical-epistemic conflict between the strict determinism of classical physics and our conscious free will: quantum randomness might open up new possibilities for free will. In most discussions of the relationship between quantum physics and consciousness, the basic ideas of quantum theory are taken in a purely metaphorical way, only as analogies, without relevant reference to their physical meaning. This can lead to interesting sci-fi ideas, which are, however, unconvincing and scientifically erroneous. Quantum-mechanical terms are often used to seek greater persuasiveness - to add "scientific weight" to subjective hypotheses.

The latest hypothesis of this kind was created by R.Penrose *) and S.Hameroff, who assumed cells or structures with "quantum sensitivity" in the brain, through which quantum mechanics is involved in brain activity. Hameroff hypothesized that suitable structures for quantum behavior are microtubules (for microtubules see e.g. "Cells - basic units of living organisms", passage "Cytoskeleton - skeleton and carrier of cell functions"), which form the cytoskeleton of cells, including neurons, where they could mediate synapses. Quantum vibrations could occur in their regular lattices of the protein tubulin, and coherent superpositions of tubulin states could span many neurons. However, experts in biochemistry, molecular cell biology and neurobiology are skeptical of this microtubule function...

The simultaneous quantum collapse of these microtubule states is interpreted as an "individual elementary act of consciousness". Penrose attributes this act to "quantum gravity", a theory which has not yet been created. From the point of view of unitary field theories, however, quantum gravity acts on much smaller spatial scales than the dimensions of microtubule molecules, so it cannot affect any of their quantum states at all.

Further similarly irrelevant constructions (such as twistors) are introduced here, probably to give more "scientific weight" to these speculations...

The idea has even arisen that quantum information from microtubules is not lost after a person's physical death, but remains embedded in the "information" structure of the universe as a kind of "counter of consciousness"..?.. This is already completely in the field of sci-fi..!.. The quantum consciousness hypothesis is often (but unjustifiably) linked to the hallucinatory experiences of people who have undergone clinical death but returned to life. In fact, these frequently described typical experiences are probably due to altered brain activity in a state of hypoxia during circulatory arrest. The relationships between matter and consciousness are briefly discussed in the passage "Soul and Matter" of the work "The Anthropic Principle or Cosmic God".

Despite extensive treatises and great efforts by Penrose *) and Hameroff, their hypothesis is not a convincing model of brain function or an explanation of the essence of consciousness and mind. It is based on unverified and debatable physical and neurobiological assumptions. Microtubules in cells probably do not play a significant role in the function of neural networks in the brain. It is apparently not necessary to use the props of quantum mechanics to truly explain the human mind and consciousness. Quantum physics operates at the molecular and atomic levels everywhere in nature, including our everyday life, but in the behavior of macroscopic objects - which brain neurons and their networks also are - quantum effects do not manifest themselves in any way. Consciousness probably arises algorithmically - combinatorially - from the enormous complexity of signal connections between billions of neurons in the brain network...

*) Note: It is difficult to understand that this lapse happened to the excellent physicist Roger Penrose, who had previously been very successful in research on black holes and cosmology. Together with S. Hawking, he developed important theorems about spacetime horizons and singularities (§3.8 "Hawking's and Penrose's theorems about singularities"). After all, his collaborator and friend Stephen Hawking flatly rejected the hypothesis of "quantum consciousness"! The ways of the human mind are unpredictable...

Other fictitious claims, those of "quantum medicine", are based on hypotheses about quantum behavior according to which the body is composed, at the quantum level, of energy and information influenced by quantum consciousness. Quantum healing is therefore supposed to cure any disease on the basis of a person's state of mind..?.. Some charlatans even claim that they can heal in this way from a distance..!..

A rational approach to reports of miracles and supernatural phenomena was already expressed by the Scottish philosopher David Hume (1711-1776): "No testimony is sufficient to establish a miracle, unless the testimony be of such a kind, that its falsehood would be more miraculous than the fact which it endeavours to establish". In other words, a mistake or a lie is more likely than a miracle. This attitude is sometimes referred to as "Hume's razor", cutting off credible information from improbable and perhaps erroneous information. Within science itself, criteria called "Occam's razor" and "Popper's razor" are used - see ""New" and "Old" physics" and "Knowledge: experience + science. Awareness - education - wisdom." in the monograph "Nuclear Physics and the Physics of Ionizing Radiation".

None of this would be worth mentioning in a physically oriented treatise if these irrational ideas had not been spreading more and more in recent years (with many people believing them)..!.. For comparison, see also the essay "Science and Religion".

Space, time, matter, universe

In the empirical observation of nature and the search for its laws, important abstract concepts such as space, time, motion and matter were formed, which are images of the general (universal) properties of being. Efforts were made to put individual isolated findings into context, to generalize them and to extrapolate from them. Questions like "When and how did the world (universe) come into being?", "How big is the universe?", "What is matter composed of?" etc. are probably as old as people's conscious thinking about nature.

One of the basic questions that philosophy asks is where to find the essence of all being - the basic "primordial matter" (the simplest and indecomposable primordial substance) from which all things, plants, animals and people are formed and composed. Ancient philosophy, which could not penetrate to the true essence of things and phenomena, naively took for this essence some superficial and purely phenomenal aspects of reality, based on immediate sensory perceptions. Thus water (Thales *), air (Anaximenes) or fire (Heraclitus) were considered the primordial substance. Later, four basic (independent and non-intertwining) primordial substances, or elements, were postulated, from which the whole world is built: water (as the essence of liquids), air (as the representative of the gaseous state), earth (as the carrier of solid properties) and fire (as the cause of mobility and variability).

*) However, in the light of today's

knowledge, it can be said that Thales was not so far from the

truth: according to current astrophysics, all elements were

actually formed in stars by nuclear reactions from hydrogen

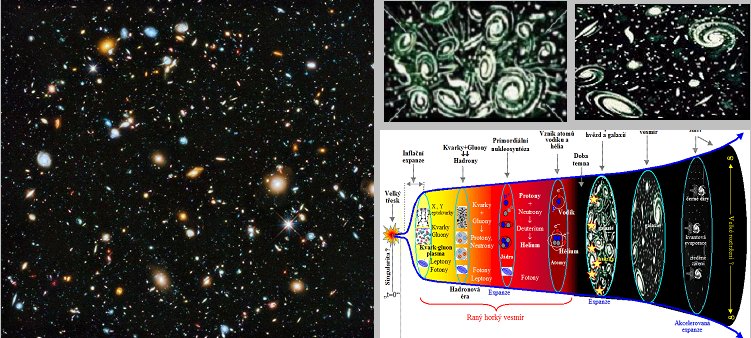

formed from elementary particles generated during the Big Bang (see §5.4 "Standard cosmological model.
The Big Bang. Shaping the structure of the universe.").

This teaching
on the four basic elements of the world was developed especially
by Aristotle (384-322 BC), who supplemented it with the concept
of four basic properties (essences): heat, cold,
moisture and dryness. The combination of such
"essences" was to create the individual basic elements of
the world (e.g. water from moisture and cold, fire from heat and
dryness). Similar ideas persisted until the Middle Ages, when
they were the basis of alchemy.

Philosophers have also addressed the

question of how matter is composed of the basic elements. As for

the structure of matter, there are basically two possibilities:

either matter is continuous (infinitely

divisible), or it consists of certain small "grains"

(atoms) that are no longer divisible. In ancient

times, it was not possible to make a reliable decision between

these two possibilities, so both concepts were held in
different philosophical schools. The doctrine of atoms was
developed mainly by Democritus (ca. 460-370 BC), who justified
the necessity of the existence of indivisible atoms by the fact
that, with unlimited divisibility, there would be nothing left
that could carry the properties of the substance. This
speculative reasoning is based on the assumption that the
properties of substances never change by division and that the
substance itself is the bearer of all its properties (current atomic and nuclear physics already looks at it
differently...). The philosophical thesis
of the structured nature of matter is almost universally accepted in the
methodology of the natural sciences.

Gravity and the Universe in Antiquity

In the earliest times, before conscious exploration of nature
began, humans did not think about gravity at all;
it was so common and mundane that people got used to it and
ignored it. They took the gravity of the Earth as a matter of
course, as a natural tendency of objects to fall to the ground.
Knowledge of what we now call gravity was at first associated
with astronomy. Astronomy and philosophy in
general - all teaching about nature was part of philosophy at the
time - reached a particularly high level in the period of ancient
Greek culture. Some ancient philosophical schools (represented by Thales of Miletus, Pythagoras,
Aristarchus, and in India by Aryabhata) had at the
time a surprisingly realistic picture of the shape, location
and motion of the nearby planets (including the
Earth) around the Sun *).

*) Opinions on the true level of ancient

science sometimes differ. There are sensational claims about the

use of electricity and atomic energy and about knowledge of outer

space in ancient civilizations. However, these claims are

completely unfounded. The legacy of ancient thinkers is so rich

and extensive that among hundreds and thousands of ideas and

statements (often contradictory) one can find some that, more or
less by accident, coincide with the conclusions
of modern science. We sometimes read into these statements a
meaning that would greatly surprise their authors...

The
development of the natural sciences, especially astronomy, and of the
overall worldview was for a long time significantly (and unfortunately mostly negatively) influenced by the teachings of the most important
representative of Greek philosophy - Aristotle. This teaching was
the culmination of ancient natural philosophy. Aristotle started
from the basic sensory experience of earthly life, that heavy
bodies tend to fall to the ground, while "light"
objects like smoke or fire rise. Based on this, Aristotle
proclaimed the concept of "natural places"
and "natural movements": the natural place of
heavy substances (earth and water) is "to be below",
the natural place of light substances (fire and air) is
"above". The natural movement of earth and water is to
descend, the natural movement of air and fire is to ascend *).

All other movements are forced by an external force.

*) The philosophical thesis that

"the like goes to the like" was already expressed by

Plato, who thus explained the fact that material bodies fall to

the ground.

This concept,

together with the assumption that the Universe has only one

center of gravity, led Aristotle to the following image of the

world: in the center of the universe is a motionless

Earth, in which the heaviest matter of the universe

gathered - earth and water; the earth is composed of soil and

water located in its natural place, so it is at rest. The

universe (i.e. the Earth and its surroundings) consists of

individual spherical layers: earth, water, air, fire. All

celestial bodies are composed of the lightest and "most

perfect" substance - ether - and perform a

"perfect" uniform circular motion over some spheres

through which they are carried. Thus, in Aristotle's conception,

the universe is composed of two diametrically opposed parts:

earthly and celestial :

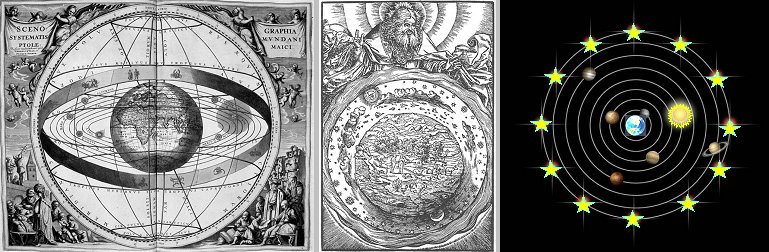

Geocentric system - Earth is the center of the universe. God directs the movement of the stars. Planets and stars orbit the Earth.

As for movement as
such, Aristotle taught that bodies move only when they are
propelled by some force - "the cart drawn by the donkey
stops when the donkey stops pulling". Aristotle did not
know inertia because he did not experiment and could not
reduce or eliminate friction sufficiently. Regarding the fall of bodies
to the ground, Aristotle claimed that the rate of fall of a body
is proportional to its weight; this erroneous conclusion again
arose from the common experience that light sparse bodies fall
much slower than dense heavy bodies.

Aristotle's geocentric cosmology
was further elaborated by Ptolemy (ca. 100-160 AD), who resolved
the discrepancies between the assumed perfectly uniform motion
and the observed irregularities of the planets' motion, together
with changes in their brightness (indicating changes in distances
between Earth and planets), by hypothesizing that real planetary
motions are composed of several uniform circular movements, using the so-called
deferent, epicycle and equant. Ptolemy thus reached a
relatively good agreement with astronomical observations, but at
the cost of considerable complexity.
The Aristotelian-Ptolemaic geocentric teaching was then canonized by the
Church - the movements of the planets and stars are controlled
by God (in the middle of the picture). These ideas remained dogma throughout the
Middle Ages (the era of "intellectual
darkness"); the development of
astronomy and the natural sciences was thus hampered for
more than a thousand years...

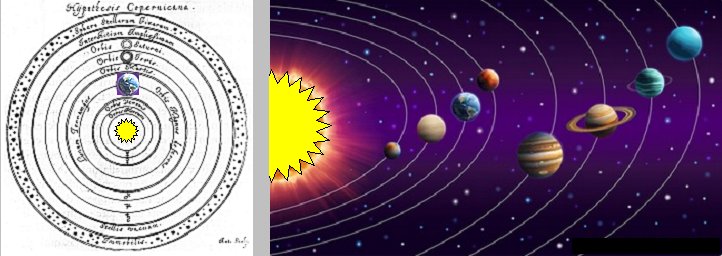

Development of scientific astronomy and physics

Heliocentric system

The first significant breakthrough into the long-prevailing

misconception of the structure of the universe was made by M.

Copernicus (1473-1543), who noticed that the observed movements

of the Sun and planets could be explained much more simply and

naturally by assuming that the Sun is the stationary center of
the universe, around which the planets, including the Earth, orbit. He
thus created the heliocentric system and showed
that the Earth is just one of the orbiting planets :

Heliocentric system - the Sun is the center of the universe, with the planets, including Earth, orbiting around it.

In addition, the Earth rotates
around its axis with a diurnal period, giving the impression
that all cosmic bodies, stars and planets, orbit it. This laid
the foundation for eliminating the senseless opposition
between "terrestrial" and "celestial" and for
bringing astronomy closer to other sciences, especially physics.
The knowledge that the Earth (and, as it
turned out later, neither the solar system nor our Galaxy) has no privileged place in the universe is
called the "Copernican principle" and plays an
important role in contemporary cosmology (Chapter
5, §5.1 "Basic starting points and
principles of cosmology").

Copernicus also realized that it was

probably not correct to assume only one center of gravity in the

universe, but that each body should have its own weight

- gravity. In Copernicus, we thus already encounter a hint of a
realistic conception of gravity as the tendency of bodies and their
parts to join together into a whole, i.e. a hint of the
concept of universal gravitation. Copernicus' conception was followed
by G. Bruno (infinity of the world in space
and time, the same nature of the fixed stars and the Sun) and especially J. Kepler (1571-1630), who, on the basis
of astronomical observations, formulated his three important laws
of planetary motion around the Sun (§1.2). Kepler
sensed that the cause of these planetary motions was a force
emanating from the Sun, but in the absence of mechanics he could
not arrive at a correct explanation; it was later supplied by Newton.

Experiment - the birth of scientific physics and natural science

Galileo Galilei (1564-1642), who can be considered the founder of

physics as a scientific discipline, made a decisive contribution

to the development of astronomy and physics. He introduced experiment

into physics as a decisive tool of knowledge. On the basis of

simple experiments with the motions of bodies, Galileo formulated

the law of inertia (which denied Aristotle's teaching on

motion), the composition of motions, and also arrived at the principle

of relativity of motion (see §1.2 and §1.6). He thus became

a pioneer in the mechanics of motion of bodies, especially

kinematics. In astronomy, Galileo was a staunch supporter of

Copernicus' heliocentric system, which he decisively supported

with his discoveries using telescopes.

Galileo was also the first scholar in

history to have made a direct and significant contribution to the

knowledge of gravitational phenomena. Through his experiments

with free-falling bodies (allegedly from the Leaning Tower of

Pisa), he arrived at the famous law

of free fall,

according to which in free fall all bodies fall to the ground

with a constant

acceleration,

which is independent of weight (mass) and composition of the body.

He thus refuted Aristotle's concept of natural movements upwards
or downwards: these are always movements of bodies under the influence of
gravity, but in an environment of greater or lesser density.

The law of free fall, generalized to the principle of universality of gravitational action and the principle

of equivalence, has become one of the main starting

points of modern gravity physics - Einstein's general theory of

relativity (see Chapter 2, especially §2.2

"Universality - a basic property and the key to

understanding the nature of gravity").

Isaac Newton (1642-1727) was a decisive

milestone in the development of physics, astronomy, and the

natural sciences in general. Above all, Newton followed up on Galileo's
findings and built mechanics, in which he precisely formulated and
mathematically expressed the three
basic laws of motion (§1.6, passage "Newton's classical mechanics"). He also discovered the basic laws of
hydrodynamics, acoustics and optics. Newton then completed his
epoch-making work by combining his and Galileo's mechanics of the
motion of terrestrial bodies with Kepler's kinematics of
planetary motion, thus arriving at his great law of universal gravitation and creating the dynamics of the
solar system; more on this in §1.2 "Newton's
law of gravitation".

Through the work of I. Newton,
the pre-scientific period of erroneous conjectures and dogmas
ended in human cognition, and a period of scientific
research,
precise experiments and logical thinking began. Newtonian

physics also provided a new, more realistic view of the universe.

Observing the universe with telescopes - telescopic astronomy

We know from everyday life that we do not see what is too far

away, we do not recognize the details and we can only guess at

the true nature of distant objects. This is even more true of

distant objects in the universe. G. Galilei first looked into
space with a simple self-constructed telescope in 1610 and was surprised to see
craters on the Moon ("moon mountains"), Jupiter's
moons, the phases of Venus, Saturn's rings. Larger

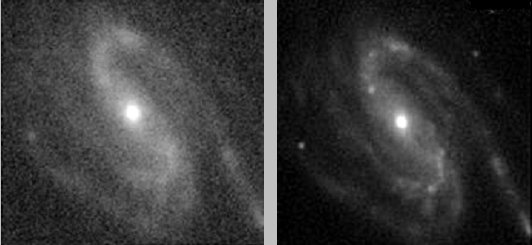

telescopes then revealed many stars invisible to the naked eye,
nebulae, new planets (Uranus in 1781, Neptune in 1846) and, during the 19th century, spiral
"nebulae" - galaxies. New instruments
placed at the foci of telescopes - photography and spectroscopy
- led to
fascinating discoveries of previously unsuspected structures in
space and of a huge number of stars, and paved the way for astrophysics to study the physical properties of stars
and galaxies (see below "Electromagnetic radiation - a fundamental
source of information about the universe").

Small telescopes are often lens
refractors - the objective consists of a
larger converging lens with a long focal length, while the eyepiece
(used as a magnifying glass to observe the image created by the
objective) is a smaller converging or diverging lens with a short
focal length. The optical disadvantage of the refractor is a color
defect (chromatic aberration) - due to the dispersion of
light, the focal length is somewhat different for different
wavelengths (colors) of light. In addition, it is technically
difficult to produce high-quality lenses of large diameter. In 1668
I. Newton assembled the first mirror telescope -
a reflector, the "objective" of which was a concave mirror
creating the image in the focal plane by means of reflection of
light. The reflector has no color defect and, in addition,
large-diameter spherical or parabolic mirrors can be precisely
ground (their deformation is prevented by mechanical
reinforcement "from behind"). That is why all large
telescopes are reflectors. A telescope of large diameter (aperture)
collects more light, so we can observe fainter and more
distant objects. In addition, larger telescopes have better
angular resolution, so we can recognize finer details of the
structure of multiple stars, star clusters, spiral arms of
galaxies and gas clouds.
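The two advantages of a larger aperture can be put into rough numbers. The following minimal sketch assumes that the collected light scales with the area of the aperture (i.e. with the square of the diameter D) and estimates the best achievable angular resolution from the Rayleigh criterion, θ ≈ 1.22 λ/D. The Rayleigh criterion, the wavelength of 550 nm and the function names are illustrative assumptions that do not come from the text; blurring by the atmosphere is ignored. The two diameters are those quoted in this section for Galileo's largest lens and for the Hooker telescope.

```python
import math

ARCSEC_PER_RAD = 180.0 / math.pi * 3600.0  # arcseconds in one radian


def light_gain(d_large_m, d_small_m):
    """Ratio of collected light for two apertures (scales with area, i.e. D^2)."""
    return (d_large_m / d_small_m) ** 2


def rayleigh_limit_arcsec(d_m, wavelength_m=550e-9):
    """Diffraction-limited resolution (Rayleigh criterion, an assumption), in arcseconds."""
    return 1.22 * wavelength_m / d_m * ARCSEC_PER_RAD


galileo_m = 0.038  # Galileo's largest lens, 3.8 cm
hooker_m = 2.54    # 100-inch Hooker mirror

print(f"light gain Hooker / Galileo: {light_gain(hooker_m, galileo_m):.0f}x")
for name, d in [("Galileo 3.8 cm", galileo_m), ("Hooker 2.54 m", hooker_m)]:
    print(f"{name}: about {rayleigh_limit_arcsec(d):.3f} arcsec")
```

Under these assumptions the light gain grows with the square of the diameter, while the resolvable angle shrinks only in direct proportion to it.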

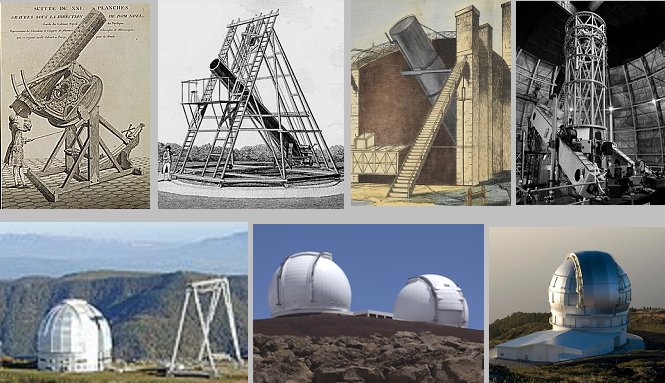

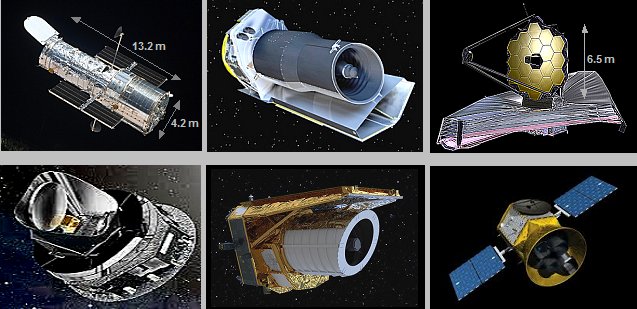

To illustrate the gradual

development, we will mention only a few important astronomical

telescopes :

-> The first were Galileo's

simple telescopes with lens diameters of 1.5, 2.5 and 3.8 cm.

-> Other simple hand-held or
table telescopes were built by I. Newton (mirror diameter
3.3 cm), J. Hevelius (6 and 12 cm), R. Hooke (18 cm), Ch. Huygens
(19, 20, 22 cm), J. Short (38 and 46 cm), N. Noël (60 cm),
J. Michell (75 cm) and others. With the exception of Newton's, these
were refracting (lens) telescopes.

-> Considerably larger reflecting
astronomical telescopes were built by W. Herschel (1.26 m in 1789)
and W. Parsons (1.83 m in 1845).

-> The 100-inch (2.54 m) Hooker

telescope built in 1917 at the Mount Wilson

Observatory in California was very important. It made fundamental

discoveries about galaxies, the expansion of the universe, dark

matter, and many details in the solar system and the distant

universe.

-> Later astronomical
telescopes have further increased the size of their primary mirrors, e.g.
Hale to 5.1 m, BTA-6 to 6 m, Keck to 10 m, GTC to 10.4 m.

| Nicolas Noël | Herschel 40-foot | Rosse six-foot | 100-inch Hooker |
| BTA-6 | Keck Observatory | Gran Telescopio Canarias | |

Astrophotography. Earth rotation correction. Pointers.

Visual observation through the eyepiece of a large astronomical

telescope allows us to see many details and a much larger number

of stars. However, this only applies to relatively closer and

brighter objects. From very distant stars, galaxies and nebulae, the
amount of light reaching us is so small that
even with a large primary mirror, the number of photons is
not sufficient for the sensitivity of the retina of our eye.
Our vision registers "instantaneous images";
the retina and the visual center cannot accumulate photons over a
longer period of time (only about 1/15 of a
second - the inertia of the eye).

This fundamental limitation was
overcome only by the invention of photography -
a photochemical reaction using silver halides - in the
1830s. Layers of silver halides were later applied to glass
plates. When a light image created by a suitable optical system,
usually a converging lens, is projected onto such a
photographic plate and left exposed for a certain period of time,
the photochemical reaction creates a latent image in the
photosensitive emulsion (formed by silver atoms released from
the halide), which becomes permanently visible after
chemical development (in a
solution of metol or hydroquinone) and
removal of the remaining halide (in sodium
thiosulfate solution). This process is commonly
used in classical photography of objects, landscapes, people,
animals, etc.; the exposure time here is usually very short,
about a tenth or hundredth of a second.

Photography is very important in astrophotography
of space objects in the night sky - planets, stars, nebulae,
galaxies. Photographic plates or films are placed in the focus of
an astronomical telescope, where the image of the observed object
is projected onto them. Exposure times can be very long, even
several hours under favorable conditions. An image
accumulated from a large number of photons is thus created in the
photosensitive emulsion, which can make visible even very distant
and faint objects that we would have no chance of seeing
by direct visual observation. When an optical prism or diffraction grating is
placed in front of the photographic film, a photographic image of
the spectrum - spectral lines - of the light emitted by
the examined object is created. Photochemical imaging has now been
replaced by electronic digital imaging using CCD
optoelectronic elements - digital astrophotography.

This leads to a substantial increase in the quantity and quality

of astronomical images of both the near and far universe. And it

allows astronomical telescopes to be placed in space, in orbit

around the Earth or the Sun, with long-distance radio

transmission of high-quality images for evaluation (as discussed below under "Space

Telescopes").

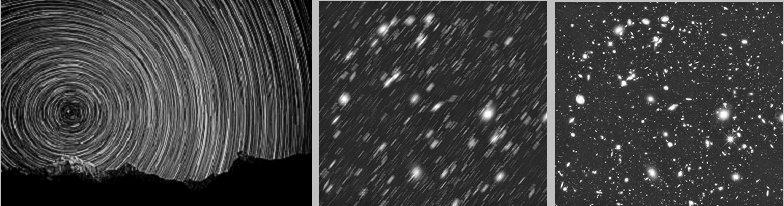

During long photographic exposures
on ground-based telescopes, the apparent movement
of the imaged objects caused by the rotation
of the Earth becomes visible (we are on a
"cosmic merry-go-round"). Instead
of a sharp point image, we get lines or arcs - motion blur
occurs. To obtain high-quality sharp images, it is necessary to mechanically
correct the effect of the Earth's rotation. The
telescope must be turned in a
synchronized manner so that it follows the apparent rotation of the sky (i.e. against the direction of the Earth's rotation)
using a "clockwork" or an electric motor (servomotor).
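How quickly the blur builds up can be estimated with a short calculation. The sketch below assumes the standard value of the sidereal day (about 86,164 s, one rotation of the Earth relative to the stars) and the usual factor cos(declination) for stars away from the celestial equator; neither value nor the function name comes from the text, so treat them as illustrative assumptions.

```python
import math

SIDEREAL_DAY_S = 86164.0905  # assumed: one rotation of the Earth relative to the stars, in seconds


def drift_arcsec(exposure_s, declination_deg=0.0):
    """Length of the trail (arc along the star's daily circle) on an untracked telescope."""
    rate = 360.0 * 3600.0 / SIDEREAL_DAY_S  # arcseconds per second at the celestial equator
    return rate * math.cos(math.radians(declination_deg)) * exposure_s


for t in (1.0, 60.0, 3600.0):
    print(f"exposure {t:6.0f} s -> trail of about {drift_arcsec(t):8.1f} arcsec at the equator")
```

At roughly 15 arcseconds per second, even an exposure of a few seconds smears a star over far more than the resolution of a good telescope, which is why the drive has to run for the whole exposure.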

| All-night exposure of the starry sky | Astronomical image without correction | Image with correction for Earth's rotation |

The synchronized movement of the telescope,
which follows the rotation of the sky, may sometimes not
be completely accurate - due to mechanical inaccuracies in the
mount, play in the bearings, elasticity of materials,
irregularities in the movement of the mechanism, etc. For precise
astrophotography, the telescope is therefore often supplemented
with a smaller parallel aiming telescope, the so-called pointing
(guide) telescope, which follows a suitable brighter star
(the "pointing" star) in angular proximity to the photographed
object. Initially, pointing was performed manually: the
astronomer watched the pointing star in an eyepiece with an
aiming cross in the middle throughout the exposure and corrected
the telescope's drift with gentle movements. Now it is performed
electronically using a camera installed in the pointing telescope
- the so-called autoguider, which evaluates deviations
of the pointing star from the center and sends correction signals to the
servomotors that move the telescope.

Newer ground-based telescopes are
installed in rotating domes. However, the
continuing "relay race" towards ever better imaging and
spectrometry of the most distant space objects is currently being
taken over by space telescopes located beyond
the Earth, in near space - discussed below in the passage "Space
Telescopes".

Electrodynamics, atomic physics, theory of relativity, quantum physics

In the middle of the 18th century, the development of mechanics

was seemingly completed. Fundamental physics focused on the study

of other physical phenomena - thermal and especially electrical and magnetic.

Electricity and magnetism

At this point, it may be useful to briefly recapitulate the
development of knowledge about the extremely important natural
phenomena of electricity and magnetism. The first
observations of electrical (electrostatic) phenomena come
from ancient Greece. Objects made of amber, a fossilized natural resin from
which jewelry and ornaments were made, were observed
to attract small light bodies - hair, feathers, yarn - when rubbed
(Thales of Miletus in the 6th century BC described that an amber
tool used for spinning flax began to attract various small bodies,
while the flax fibers began to repel each other).
Amber is called elektron in Greek, which later gave a
collective name to all these phenomena (the
name elektricitas derived from amber was used by W.
Gilbert in the study of static electricity, although he observed
attractive forces upon friction in some other materials as well,
especially glass). For many centuries,
these phenomena served only as a curiosity and for
conjuring demonstrations; nothing was known about their cause and
nature.

Only the existence of two kinds of electric charges (conventionally called positive
"+" and negative "-") had been discovered,
with charges of the same kind repelling each
other and charges of the
opposite kind attracting each
other. Later, the
law of conservation of electric
charge was discovered
(B. Franklin). In 1784, Ch. A. Coulomb, with the help of a sensitive
torsion balance of his own construction, measured the interaction of
electric charges (Priestley and Robison
dealt with these experiments independently) and discovered the basic law of
electrostatics - Coulomb's law (1.20b), similar to Newton's law
of gravitation (the comparison of the laws
of electrostatics and gravity is discussed in detail in §1.4
"Analogy between gravity and electrostatics").

In 1789, in his well-known
experiments with frog legs, Galvani observed muscle contraction
when touching an iron railing - indirectly observing the biological effects of the discharge of electric charges, i.e. of
electric current (at that time there was
still a distinction between "galvanic" and frictional
electricity). In
1799 A. Volta first designed a source of "galvanic
current" - the electrochemical voltaic
cell; this
current was shown to be of the same nature as the
"discharge current" generated for a short time by the
conductive connection of oppositely charged
bodies. Electrochemical sources - voltaic cells assembled into batteries - made it possible to study the
continuous passage of an electric current through conductors and to assemble the first electrical circuits.

Completely separately and
independently of electrical phenomena, other phenomena of
"mysterious" force action were observed - magnetic phenomena. It was observed already in
ancient times that some minerals attract or repel each
other and that they attract iron objects. Iron ore mined near the
city of Magnesia in Asia Minor was the best known
in this respect; this ore (iron oxide Fe3O4)
was called magnetite, which gave the collective name
to magnetic phenomena. When placed on a cork float on water, or
when hung on a thread, a piece of this magnetic ore always turned in
the same direction - one end to the north and the other to the
south. Thus, two magnetic poles were distinguished - north
and south; magnetic "needles" found important use in compasses (the
Chinese used such a magnet 4,000 years ago to determine the
correct geographical direction when traveling). In
Europe, magnets were studied experimentally in detail around
1600 by the English physician W. Gilbert. As with electrical
phenomena, no one had any idea about the nature of magnetic
phenomena until the end of the 18th century
(the fluid theory vaguely spoke of northern and
southern "magnetic quantities", which, however, unlike
electric charges, cannot be separated from each other).

The first important breakthrough

into the essence of magnetic phenomena and their connection with

electrical phenomena began with the accidental discovery of

H.Ch.Oersted in 1820, who noticed during experiments with

electrical circuits, that the magnetic

needle deflects near the conductor through which the current

passes - that is, that the electric current causes a magnetic

field in the same way as if a permanent magnet had been applied

instead of the conductor with the electric current. It turned out

gradually that the mysterious magnetic action, which until then

was the domain of only natural substances, permanent magnets,

probably has an electrical origin - it is

created by the movement of electric charges. And

the magnetic field, in turn, exerts a force on the moving

charges, on the electric currents.

This was soon shown even more
convincingly by the experiments of A. Ampère (1775-1836), who
discovered the law of mutual force (magnetic) action of electric
currents. In 1820, Biot and Savart measured the intensity of the
magnetic field around a current-carrying conductor;
these results were further generalized by Laplace - the Biot-Savart-Laplace law (1.33a) was created, indicating
the dependence of the intensity of the magnetic field excited by
the current in a conductor element on the magnitude of the
current and on the distance. These laws led to the construction
of "artificial magnets" powered by electric current - electromagnets. The magnetism
of permanent magnets was later explained by atomic theory.

Another key finding was the law of electromagnetic induction discovered in 1831 by M. Faraday,
according to which a time change of the magnetic field evokes
(induces) an electric field, where the induced voltage is
proportional to the rate of change of the magnetic flux
through the surface of the considered conductor loop - relation
(1.37a). These findings became the basis not only of electrodynamics (the merger of the science of electricity
and magnetism), but also of the practical application of
electromagnetic phenomena - electrical
engineering was created.

Faraday further expressed the

idea, based on his experiments, that the electric and magnetic

forces do not take place directly from one charge to another, but

spread through the environment lying between

them. He thus laid the foundations of the theory of the electromagnetic field, which was further
elaborated, generalized and mathematically formulated by J. C. Maxwell
(1831-1879) in the 1860s. The theory of the electromagnetic
field led Maxwell to the knowledge of the finite
speed of
propagation of electromagnetic action, equal to the speed of light
*), to the prediction of electromagnetic
waves and
to the hypothesis of the electromagnetic nature of light. The

experiments of H.Hertz and his followers, which proved the

existence of electromagnetic waves and found out some of their

properties, fully confirmed the correctness

of Maxwell's theory. From the physical-mathematical point of

view, the theory of the electromagnetic field is discussed in

§1.5 "Electromagnetic

field. Maxwell's equations".

The speed of light

- or the speed of propagation of electromagnetic waves, or the
speed of photons - is extraordinarily high
compared to all other terrestrial speeds (about a million times the
speed of sound in air), so in earlier times it was not easy to
measure it accurately (it was often considered infinite).
The first approximate determination was made astronomically in
1676 from observations of the eclipses of Jupiter's moons (O. Roemer, c ≈ 225 000 km/s).

But a real measurement of the
speed of light using terrestrial sources and optical-mechanical
means was not made until 1849, by Fizeau. In this classic
experiment, a beam of light passed through a gap between
the teeth of a rotating gear wheel, travelled to a distant mirror
and was reflected back towards the wheel. As the
rotation speed of the wheel increased, it was observed that at a certain
speed the reflected beam no longer passed through the wheel: the
beam that passes through a gap between the teeth
returns to the wheel, after travelling to the mirror and back,
just when the wheel
has rotated by such an angle that a tooth stands in the path of
the beam instead of a gap. If the distance between
the gear wheel and the reflecting mirror is d and the wheel, rotating
at a frequency f, has N teeth around its
circumference, then the speed of light c and the first
frequency f at which the reflected beam stops passing are connected by the
simple relation $c = 4\,d\,f\,N$ (the coefficient 4 arises from the fact
that the distance d is covered twice and the rotation
time of the wheel from a gap to the adjacent tooth is $1/(2 f N)$). Fizeau
obtained the result c ≈ 313,000 km/s.
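The relation can be checked numerically. The sketch below derives c from the two ingredients named above (a path of 2d, covered in the time the wheel needs to turn from a gap to the next tooth). The input values (mirror distance 8633 m, 720 teeth, about 12.6 revolutions per second) are the figures commonly quoted for the 1849 experiment; they are not given in the text and are used here only as an illustrative assumption, as is the function name.

```python
def fizeau_speed_of_light(d_m, f_hz, n_teeth):
    """Speed of light from Fizeau's toothed-wheel relation c = 4 d f N."""
    # The wheel has N teeth and N gaps, so gap -> tooth is 1/(2N) of a revolution.
    blocking_time_s = 1.0 / (2.0 * f_hz * n_teeth)
    # During that time the light travels to the mirror and back (2 d).
    return 2.0 * d_m / blocking_time_s


# Commonly quoted values for the 1849 experiment (assumed, not from the text)
c = fizeau_speed_of_light(d_m=8633.0, f_hz=12.6, n_teeth=720)
print(f"c is roughly {c / 1000.0:.0f} km/s")
```

With these assumed inputs the result lands close to the 313,000 km/s quoted above, about 4-5 % above the modern value.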

In 1850, J. Foucault used a rotating

mirror to determine the speed of light propagation. The emitted

light is reflected from the rotating mirror towards a stationary

mirror 18 meters away, and from it is reflected back to the

rotating mirror, which in the meantime has rotated through a

certain small angle. From the angle of rotation of the beam,

Foucault determined the speed of light to be 298,000 km/s. In

1879, A. Michelson refined the measurement using this method to a

value of c~299,909 km/s, and then in 1929 he reached a value of

299,798 km/s.
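The step "from the angle of rotation of the beam" can be made explicit with the usual textbook idealization of the rotating-mirror method, which is an assumption here rather than something stated in the text: during the round trip of duration 2d/c the mirror turns by an angle α = 2πf·(2d/c), and a mirror turned by α deflects the reflected beam by 2α. The rotation frequency of 500 revolutions per second used below is a purely hypothetical value chosen for illustration; only the distance of 18 m comes from the text.

```python
import math


def foucault_deflection_rad(d_m, f_hz, c_m_s=299_792_458.0):
    """Angular shift of the returning beam for mirror distance d and rotation frequency f."""
    round_trip_s = 2.0 * d_m / c_m_s                    # flight time to the fixed mirror and back
    mirror_turn = 2.0 * math.pi * f_hz * round_trip_s   # angle turned by the rotating mirror
    return 2.0 * mirror_turn                            # reflection doubles the angle


def foucault_speed_of_light(d_m, f_hz, deflection_rad):
    """Invert the relation above: c = 8 * pi * f * d / deflection."""
    return 8.0 * math.pi * f_hz * d_m / deflection_rad


delta = foucault_deflection_rad(d_m=18.0, f_hz=500.0)  # f is a hypothetical value
print(f"beam deflection: {delta * 1e3:.3f} mrad")
print(f"recovered c: {foucault_speed_of_light(18.0, 500.0, delta) / 1000.0:.0f} km/s")
```

The deflection is under a milliradian even for a fast mirror, which illustrates why the angle had to be measured very precisely.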

In later experiments, the
measurement of the speed of light was gradually refined further
using laser technology, including measurements with a laser
reflector on the Moon. The current value is c = 299,792.458
km/s in vacuum.

Note: In 1983,
metrologists at the 17th General Conference on Weights and Measures
decided that the speed of light would be defined as a natural
constant with the exact value c = 299,792,458 m/s.
The meter is therefore derived from the speed of light
in a vacuum: 1 meter is equal to the length of
the path traveled by light in a vacuum in 1/299,792,458 of a second.
Therefore, further refinement of the measurement of the speed of
light no longer affects the value of c, but rather the exact
realization of the length of 1 meter.

In material environments, the speed of light, and of
electromagnetic waves in general, c′ = 1/√(ε·μ), is lower than in
a vacuum, c = 1/√(ε₀·μ₀), depending on the electrical permittivity
ε and magnetic permeability μ of the substance (this is analyzed
in §1.1, part "Electromagnetic and optical properties of
substances", of the monograph "Nuclear physics and physics of
ionizing radiation"). It also depends somewhat on the wavelength
of light (so-called dispersion). E.g., in water the speed of light
for red light is (rounded) 226 000 km/s, for violet 223 000 km/s.
It is even slower in glass and crystals. Among natural materials,
diamond has one of the highest refractive indices (n = 2.42), so
the speed of light in it is only 123,881 km/s. This leads to
pronounced refraction and reflection of light in diamond crystals,
which accounts for its aesthetic popularity in jewelry.
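The relations above can be checked with a short calculation. This is a minimal sketch: the values of ε₀ and μ₀ are the CODATA 2018 recommended values (an addition, not given in the text), the function names are illustrative, and the refractive indices of water are simply back-calculated from the rounded speeds quoted above via n = c/c′.

```python
import math

C_KM_S = 299_792.458       # speed of light in vacuum, km/s
EPS0 = 8.8541878128e-12    # vacuum permittivity, F/m (CODATA 2018, assumed here)
MU0 = 1.25663706212e-6     # vacuum permeability, H/m (CODATA 2018, assumed here)

def wave_speed(eps, mu):
    """Speed of electromagnetic waves (m/s) in a medium: 1/sqrt(eps*mu)."""
    return 1.0 / math.sqrt(eps * mu)

def speed_km_s(n):
    """Speed of light (km/s) in a medium with refractive index n."""
    return C_KM_S / n

def refractive_index(speed_in_medium_km_s):
    """Refractive index implied by a given speed of light in the medium."""
    return C_KM_S / speed_in_medium_km_s

c_vacuum = wave_speed(EPS0, MU0)

print(f"c from eps0 and mu0: {c_vacuum:.0f} m/s")
print(f"diamond (n = 2.42): {speed_km_s(2.42):.0f} km/s")
print(f"water, red light:    n = {refractive_index(226_000):.4f}")
print(f"water, violet light: n = {refractive_index(223_000):.4f}")
```

The small difference between the two indices of water is the dispersion mentioned in the text.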

The speed of light in a vacuum

does not depend on the speed of movement of the source.

Measurements by Michelson and Morley between 1881 and 1904
(comparing the speed of light along and across the direction of
the Earth's motion) even showed that the speed of light in a
vacuum does not depend on the state of motion of the source or
observer - it is the same in all inertial systems, no matter how
fast they move. This fact, expressed

in the principle of constant speed of light,

became the basis of the special theory of relativity

(§1.6 "Four-dimensional

spacetime and special theory of relativity") and thus of the whole relativistic

physics.

Note: The specific numerical value of the speed of light c is by
no means exceptional; it simply follows from the units chosen for
length [m] and time [s]. In §1.6 and many other

places in our explanation of the theory of relativity and

gravity, we will often use a system of units in which the speed

of light is c = 1.

The theoretical result of

electrodynamics and the special theory of relativity, that the

velocity of photons in a vacuum is exactly equal to c,

holds for a plane unlimited wavefront of electromagnetic

radiation. However, if the radiation beam is narrowed in the

transverse direction (the wavefront is spatially limited),

quantum uncertainty relations begin to apply, leading, among
other things, to fluctuations in the velocity of photons in
vacuum (these subtle differences are difficult to measure).

How fast is gravity?

- or how long does it take for a change in the gravity of one body
to take effect on another (distant) body? We can illustrate this
with the example of our Earth, which is held in its orbit by the
gravitational force of the Sun. If, hypothetically, the Sun
suddenly ceased to exert its attraction ("the devil would steal it
at once"), the motion of the Earth would change from an ellipse to
a straight line heading into distant space. But how long after the
removal of the Sun would this happen? Immediately, as assumed by
the classical Newtonian idea of instantaneous action at a
distance, according to which gravity propagates infinitely fast?
Or in about 8 minutes, which is about the time it takes for the
Sun's rays to reach us? Or after some other finite time?

From the point of view of this speculative example, the question
is certainly of little importance. However, the value of the speed
of gravity has a fundamental influence on phenomena in outer space
- in astrophysics and cosmology *). If the gravitational force
acted immediately at any distance, the evolution of the universe
would proceed completely differently than if gravity affected all
material bodies with a delay given by its finite velocity. We will
first touch on this question at the end of §1.2, part
"Modification of Newton's law of gravitation", but mainly in §2.5
"Einstein's equations of the gravitational field" and in §2.7
"Gravitational waves", where the passage "How fast is gravity?"
briefly discusses the general questions of the propagation
velocity of changes in the gravitational field and the possibility
of its experimental determination. We will see that the "speed of
gravity" is the same as the "speed of electromagnetism", i.e.
changes in the gravitational field propagate at the speed of
light, just like disturbances in the electromagnetic field.

*) In addition, perhaps in the multidimensional "brane" theory of
superstrings (§B.6 "Unification of fundamental interactions.
Supergravity. Superstrings.", passage "Another dimension,
M-theory, 11-dimensional theory of superstrings"), where the
assumed gravitational action between the branes cannot be
instantaneous ..?..
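The delay of "about 8 minutes" in the example with the Sun can be checked directly. The following minimal sketch assumes that the change in the gravitational field propagates at the speed of light, as stated above; the value of the astronomical unit used for the mean Sun-Earth distance and the function name are additions for illustration.

```python
C_KM_S = 299_792.458    # speed of light in vacuum, km/s
AU_KM = 149_597_870.7   # astronomical unit (mean Sun-Earth distance), km

def propagation_delay_s(distance_km, speed_km_s=C_KM_S):
    """Time (s) for a disturbance moving at the given speed to cover the distance."""
    return distance_km / speed_km_s

delay_s = propagation_delay_s(AU_KM)
delay_min = delay_s / 60.0

print(f"Sun-Earth delay: {delay_s:.1f} s = {delay_min:.2f} min")
```

In this picture the Earth would continue along its orbit for roughly eight more minutes and would "learn" of the Sun's disappearance only at the moment the last sunlight arrived.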

Classical Faraday,

Ampere and Maxwell electrodynamics is a macroscopic and phenomenological theory - it perfectly describes

the properties of electric and magnetic fields in vacuum and in

material environments, their temporal changes and mutual

transformations. However, it does not take into account the
details of the structure of matter or the nature of the actual
elementary "carriers" of electric and magnetic forces. The

first "microscopic" theory of electromagnetism was

developed in 1895 by H.A.Lorentz, but a full understanding

of the relationship between electromagnetism and the structure of

matter was made possible by the development of atomic and nuclear physics - see below.

A great stimulus for the development of physics during the 19th
century came from the technical problems arising during the
Industrial Revolution. These led to fundamental discoveries that
gave physics the character of a comprehensive science.

Some methodological issues of the construction of

physics and its incorporation into other natural sciences, as

well as in the context of scientific knowledge in general, are

discussed in §1.0 "Physics - fundamental natural science" of the
monograph "Nuclear physics and physics of ionizing radiation".

Microstructure of matter - atomic and

nuclear physics

At the turn of the 19th and 20th centuries, research into

electrical phenomena opened the door to an understanding of one

of the most basic unresolved issues - the structure and

composition of matter. And in turn, the discovery of the

basic building blocks of matter has made it possible to better

understand the nature and origin of electric forces.

When chemists (especially J.Dalton) revived the idea of atoms at
the beginning of the 19th century, practically nothing was known
about the nature and structure of the atoms themselves. Faraday's
experiments with electrolysis in 1836 suggested that chemical
bonding had much in common with electrical phenomena. In 1897,
J.J.Thomson, during experiments with gas discharges, discovered an
elementary particle carrying a negative charge, the electron, and
proposed the first idea of the atom ("plum pudding model"). In
1911, E.Rutherford, together with Geiger and Marsden, carried out
an important experiment on the scattering of alpha particles,
which led to the discovery of the atomic nucleus and to the
planetary model of the atom. In 1913, N.Bohr supplemented the
planetary model with three quantum postulates; the resulting Bohr
model of the atom is still used with certain modifications.

Atomic and nuclear physics has shown

that the origin of electric and magnetic forces

lies in the basic elementary particles that make up matter - electrons

and protons, which are carriers of negative and

positive electric charges. It also explains all electrical and

magnetic properties of substances, including the cause of the

magnetic properties of permanent magnets. Atomic physics further
explains the mechanical and optical properties of substances and
especially chemical bonding - the essence of chemical bonding is
the electrical attractive forces between atoms, which, when
sufficiently close to each other, share some of the electrons of
their valence shells.

The structure of atoms and atomic nuclei is discussed in more
detail in §1.1 "Atoms and atomic nuclei" of the monograph "Nuclear
physics and physics of ionizing radiation". Research into
radioactivity (discovered in 1896 by H.Becquerel) and ionizing
radiation has played a crucial role in elucidating the properties
of atoms and atomic nuclei - see §1.2 "Radioactivity" and §1.6
"Ionizing radiation" in the same treatise.

By applying laboratory knowledge of

atomic and nuclear physics to phenomena occurring in space, nuclear

astrophysics was created, which clarifies the origin of

radiation in space, evolution of stars, release of energy by

thermonuclear reactions inside stars, formation of elements by

cosmological and stellar nucleosynthesis (§4.1

"The role of gravity in origin and evolution stars"), dramatic events of

supernova explosions (§4.2 "Final

stages of stellar evolution. Gravitational collapse").

Living Nature - Biology

In parallel with physics, astronomy, chemistry and other sciences
of inanimate nature, significant discoveries have also been made
since the 18th century in biology - the science of living
organisms. The earlier descriptive examination of external

manifestations and often random similarities was replaced by a

systematic examination of the structure, development, metabolism,

species classification and interrelationships of living

organisms. The basis of biology has become the science of the

structure and activity of the cell as the basic

building block of organisms (in more detail "Cells - the basic units of living organisms").

An important circumstance for a

correct understanding of life was the abandonment of so-called vitalism

- the assumption that complex "organic" substances are

created by the action of some specific "vital forces"

which are inherent only in living organisms (they are different

from the forces controlling inanimate nature). Careful